Predicting NBA Scores Part 3

Improving some straightforward priors

In our last post, we looked in depth at a single prior and how to align it with our expectations. Specifically, we looked at home field advantage, where developing a prior model was fairly formulaic: decide which values would be ridiculous (in this case, a negative home field advantage or one greater than 5 points) and then set our prior to have 99% of its density between these bounds.

In this post, we’ll apply this formula to some more priors and see when the method breaks down. In the following post, we’ll overcome the limitations of this simple formula.

At the bottom of this post is our Stan model, rewritten to draw from the full distribution of every prior. Looking at those distributions, we see our old friend home field advantage. You can see the bounds we introduced last time: a hard threshold at 0 points, and 99% of the density falling below 5 points.
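As a quick sanity check on those bounds, here is a minimal Python sketch (not part of the original model) that recovers the half-normal scale from the 5-point threshold. The function names are made up for illustration, and the only assumption is the folded-normal form used in the Stan code at the bottom of the post.

```python
from statistics import NormalDist


def half_normal_sigma(threshold: float, mass: float = 0.99) -> float:
    """Scale of a half-normal (a normal folded at 0) with `mass` below `threshold`."""
    # For X = |N(0, sigma)|, P(X < t) = 2 * Phi(t / sigma) - 1.
    # Solving for sigma gives t / Phi^{-1}((1 + mass) / 2).
    z = NormalDist().inv_cdf((1 + mass) / 2)
    return threshold / z


def half_normal_cdf(x: float, sigma: float) -> float:
    """P(X < x) for the half-normal with scale sigma, x >= 0."""
    return 2 * NormalDist(0, sigma).cdf(x) - 1


sigma = half_normal_sigma(5.0)
print(f"sigma = {sigma:.3f}")
print(f"mass below 5 points = {half_normal_cdf(5.0, sigma):.4f}")
```

The divisor works out to roughly 2.576, which is where the `2.57` in the `transformed data` block comes from.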

Prior modeling offensive strength

Let’s look at another prior: average team offensive strength. This parameter essentially describes the points per game of an average NBA team.

Here’s the prior we used:

theta_offense_bar ~ normal(116, 10);

We used our old lazy trick: set the mean to something reasonable and set the variance large to give our laziness some breathing room.

But this prior ends up being pretty unreasonable. Do you really expect an average team to score 95 points a game? What about 135? Let’s tighten up our prior to match our expectations. Following the same formula from last time: what values would be completely unreasonable? Once we define those, put 99% of our prior density between those values.

So what values are ridiculous for an average team’s points per game? Judging by the last twenty years of data, it would be extremely unlikely for an average team to score fewer than 90 points or more than 130 points.

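A prior centered at 110, with three standard deviations on either side reaching 90 and 130, corresponds to:

theta_offense_bar ~ normal(110, 6.67);

Strictly speaking, a three-sigma bound puts about 99.7% of the density between 90 and 130. An exact 99% interval would divide the half-width of 20 by 2.57 instead, giving a standard deviation of about 7.8. Compared with our old prior, this looks much better.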
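To put numbers on that comparison, the following sketch computes how much density each prior places between 90 and 130. It is an illustrative check, not code from the original analysis, and `mass_between` and `normal_from_bounds` are hypothetical helper names.

```python
from statistics import NormalDist


def mass_between(mean: float, sd: float, lo: float, hi: float) -> float:
    """Probability that a normal(mean, sd) draw lands between lo and hi."""
    dist = NormalDist(mean, sd)
    return dist.cdf(hi) - dist.cdf(lo)


def normal_from_bounds(lo: float, hi: float, mass: float = 0.99) -> tuple[float, float]:
    """Mean and sd of the normal that puts `mass` symmetrically between lo and hi."""
    z = NormalDist().inv_cdf((1 + mass) / 2)
    return (lo + hi) / 2, (hi - lo) / 2 / z


old = mass_between(116, 10, 90, 130)    # the original lazy prior
new = mass_between(110, 6.67, 90, 130)  # the tightened prior
mean_99, sd_99 = normal_from_bounds(90, 130)

print(f"old prior mass in [90, 130]: {old:.4f}")
print(f"new prior mass in [90, 130]: {new:.4f}")
print(f"exact 99% prior: normal({mean_99:.0f}, {sd_99:.2f})")
```

The old prior leaves roughly 8.5% of its density outside the bounds, while the tightened one leaves about 0.3%.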

Prior modeling the variance in offensive strength

There’s another parameter in our model that describes how much variation in offensive strength there is across teams. If this parameter is 0, all teams have the same offensive strength. If this value is really high, teams have wildly different offensive strengths. Here’s the prior model we used:

real<lower=0> sigma_offense_bar;

sigma_offense_bar ~ cauchy(0, 5);

A half-Cauchy centered on 0. Why Cauchy? The Cauchy is far heavier-tailed than the normal distribution. When we set 99% of the density to align with our expectations, the other 1% is very flat and extends well beyond our reasonable thresholds. Effectively, it says: “if we’re wrong about our thresholds, go nuts. The true value might be anything.” By contrast, the more moderately-tailed normal distribution says: “if we’re wrong about our thresholds, we’re still probably pretty close.”

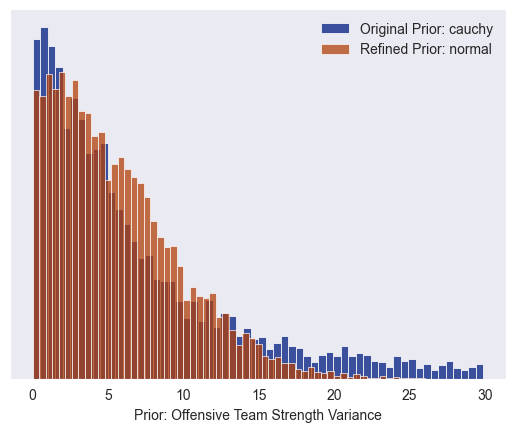
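The difference in tail weight is easy to quantify. The sketch below compares the probability of exceeding a few values under a half-Cauchy with scale 5 (the prior above) and a half-normal with the same scale. The half-normal scale of 5 is an assumption chosen only to make the comparison concrete; it is not a prior taken from the model.

```python
import math
from statistics import NormalDist


def half_cauchy_tail(x: float, scale: float) -> float:
    """P(X > x) for a half-Cauchy with location 0 and the given scale."""
    return 1 - (2 / math.pi) * math.atan(x / scale)


def half_normal_tail(x: float, sigma: float) -> float:
    """P(X > x) for a half-normal (normal folded at 0) with scale sigma."""
    return 2 * (1 - NormalDist(0, sigma).cdf(x))


for x in (10, 20, 50, 100):
    cauchy = half_cauchy_tail(x, 5)
    normal = half_normal_tail(x, 5)
    print(f"P(X > {x:>3}): half-Cauchy {cauchy:.4f}, half-normal {normal:.2e}")
```

At 20, for instance, the half-Cauchy still holds about 15.6% of its density, while the half-normal holds about 0.006%.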

Here’s what the half-cauchy prior model looks like for the variation of offensive strength across teams:

You can see how heavy-tailed this prior model is: once you get to about 20, the distribution is very flat. The x-axis is clipped at 30, but the density keeps extending well past 30. How heavy-tailed is it really? If you take 4,000 draws from the prior model, these are the most extreme values you’ll see:

419,159?! There are 9 draws over 1,000 and 115 draws over 100. These are very extreme parameter values! Our prior model is saying: the variation across teams is probably somewhere below 10, but if it’s more than 10, it can be anything!
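Those counts are about what the half-Cauchy predicts. Here is a small sketch, using only the closed-form tail probability, of how many of 4,000 draws we should expect above a given value. The function name is hypothetical, and the scale of 5 comes from the prior above.

```python
import math


def expected_exceedances(threshold: float, scale: float = 5.0, n_draws: int = 4000) -> float:
    """Expected number of half-Cauchy(0, scale) draws above `threshold`."""
    # Half-Cauchy tail: P(X > x) = 1 - (2 / pi) * atan(x / scale)
    tail = 1 - (2 / math.pi) * math.atan(threshold / scale)
    return n_draws * tail


over_100 = expected_exceedances(100)
over_1000 = expected_exceedances(1000)
print(f"expected draws over 100:   {over_100:.1f}")
print(f"expected draws over 1,000: {over_1000:.1f}")
```

This gives about 127 draws over 100 and about 13 over 1,000, in line with the 115 and 9 we observed.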

These extreme values are obviously unreasonable. To suppress the unreasonably large values, we can switch to the more moderately-tailed normal distribution:

You can see most of the density is contained in the same region between zero and ten. Above ten, however, the Cauchy distribution is much flatter.

Now let’s run our old formula: what is a reasonable bound on the offensive team strength variance parameter? Once we define those bounds, we can put 99% of our moderately-tailed normal distribution between them. Unfortunately, this is where the plug-and-chug formula breaks down. Unlike home field advantage, which everyone has intuition about, this is a more abstract parameter that is harder to pin down with your gut. In the next post, we’ll figure out what to do about that.

The Stan Model

To get all the prior distributions, I moved the entire prior model into the generated quantities block:

// Hierarchical IRT regression
//
// This models the points of home and away teams
// as a function of the latent offensive and defensive
// strength of the teams.
//
// Specifically, this version tries to improve on the
// prior modeling.
data {
  // Number of games
  int<lower=1> N_games;
  // Number of teams in the league
  int<lower=1> N_teams;
  // Home and away points scored in each game
  array[N_games] int<lower=0> home_points;
  array[N_games] int<lower=0> away_points;
  // Team index for each game
  array[N_games] int<lower=1, upper=N_teams> home_team;
  array[N_games] int<lower=1, upper=N_teams> away_team;
  // Threshold for home field advantage
  // 99% of the density should be between 0 and this value
  real home_field_advantage_threshold;
}

transformed data {
  // Section 3.3.1 https://betanalpha.github.io/assets/case_studies/prior_modeling.html
  real home_field_advantage_prior_sigma = home_field_advantage_threshold / 2.57;
}

generated quantities {
  // Prior Modeling

  // Average strength of the teams
  real theta_offense_bar;
  theta_offense_bar = normal_rng(116, 10);

  // Home field advantage
  // Put 99% of density between 0 and input {home_field_advantage_threshold}
  real<lower=0> home_field_advantage;
  home_field_advantage = abs(normal_rng(0, home_field_advantage_prior_sigma));

  // Variations of the teams strength
  real<lower=0> sigma_offense_bar;
  real<lower=0> sigma_defense_bar;
  sigma_offense_bar = abs(cauchy_rng(0, 5));
  sigma_defense_bar = abs(cauchy_rng(0, 5));

  // Individual team strength
  real theta_offense;
  real theta_defense;
  theta_offense = normal_rng(theta_offense_bar, sigma_offense_bar);
  theta_defense = normal_rng(0, sigma_defense_bar);

  // Gaussian noise in the points
  real<lower=0> sigma_points;
  sigma_points = abs(cauchy_rng(0, 5));

  real home_points_regression = home_field_advantage + theta_offense + theta_defense;
  real away_points_regression = theta_offense + theta_defense;
  real home_points_sample = normal_rng(home_points_regression, sigma_points);
  real away_points_sample = normal_rng(away_points_regression, sigma_points);
}
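If you want to poke at these prior draws without compiling Stan, the `generated quantities` block can be mirrored roughly in plain Python. This is a sketch under assumptions, not the code used for the plots in this post: the function names are made up, the threshold of 5 points is the value discussed above, and the random streams will not match Stan's.

```python
import math
import random


def half_cauchy(rng: random.Random, scale: float) -> float:
    """Draw from Cauchy(0, scale) by inverse CDF, then fold at zero."""
    return abs(scale * math.tan(math.pi * (rng.random() - 0.5)))


def prior_draw(rng: random.Random, home_field_advantage_threshold: float = 5.0) -> dict:
    """One joint draw that follows the generated quantities block line by line."""
    hfa_sigma = home_field_advantage_threshold / 2.57
    theta_offense_bar = rng.gauss(116, 10)
    home_field_advantage = abs(rng.gauss(0, hfa_sigma))
    sigma_offense_bar = half_cauchy(rng, 5)
    sigma_defense_bar = half_cauchy(rng, 5)
    theta_offense = rng.gauss(theta_offense_bar, sigma_offense_bar)
    theta_defense = rng.gauss(0, sigma_defense_bar)
    sigma_points = half_cauchy(rng, 5)
    home_mean = home_field_advantage + theta_offense + theta_defense
    away_mean = theta_offense + theta_defense
    return {
        "home_field_advantage": home_field_advantage,
        "sigma_offense_bar": sigma_offense_bar,
        "home_points_sample": rng.gauss(home_mean, sigma_points),
        "away_points_sample": rng.gauss(away_mean, sigma_points),
    }


rng = random.Random(0)  # fixed seed so the run is repeatable
draws = [prior_draw(rng) for _ in range(4000)]
share_below_5 = sum(d["home_field_advantage"] < 5 for d in draws) / len(draws)
print(f"share of home field advantage draws below 5: {share_below_5:.3f}")
print(f"largest sigma_offense_bar draw: {max(d['sigma_offense_bar'] for d in draws):,.0f}")
```

The share of home field advantage draws below 5 should land close to 0.99, and the largest `sigma_offense_bar` draw shows the same heavy-tail behavior discussed earlier.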
